Reducing decision risk in banking with UX research

Impacts

- Sampling bias ↘

- User profile representativeness ↗

- UX research rigor ↗

- Methodology adopted by client UX team


My Role

- Designed the experimental protocol

- Ran 100+ controlled lab sessions

- Analyzed data with SAS and statistical moderation models

- Delivered actionable, evidence-based recommendations


Project Context

A UX study on a major banking mobile app

As part of my Master's thesis in UX, I joined a large-scale applied research project at Tech3Lab, conducted in partnership with a major Canadian financial institution. The project's goal was to improve accessibility and the overall user experience of the bank's mobile application.

For over a year, our team ran an experimental study to understand how age and digital self-efficacy influence different dimensions of user experience when completing core banking tasks.

Team and Collaboration

UX lab and banking partner

Throughout the project, I worked closely with the lab's research directors and assistants. I began remotely during the exploratory phases, sharing and validating research work, then moved on site to run the lab sessions alongside the research assistants. The role required strong scientific discipline and clear, structured communication with a wide range of stakeholders: researchers, directors, research assistants, and the client's UX team.

Research Question

Adding nuance to age as a segmentation variable

Age is one of the most commonly used sampling variables in UX research, and for good reason. Online banking in particular is an essential service used across a wide demographic range, so age remains a relevant proxy for capturing varied usage profiles. The question this project set out to explore was not whether age is useful, but whether it tells the full story.

A review of the literature pointed to digital self-efficacy as a variable worth examining alongside age. The research question: to what extent does age affect user experience, and does digital self-efficacy moderate that relationship and add nuance to it?

The goal was to give the banking partner two things:

- Scientific evidence on the role of self-efficacy as a moderating variable

- Concrete recommendations for making sampling practices more nuanced in future studies

Process and Methods

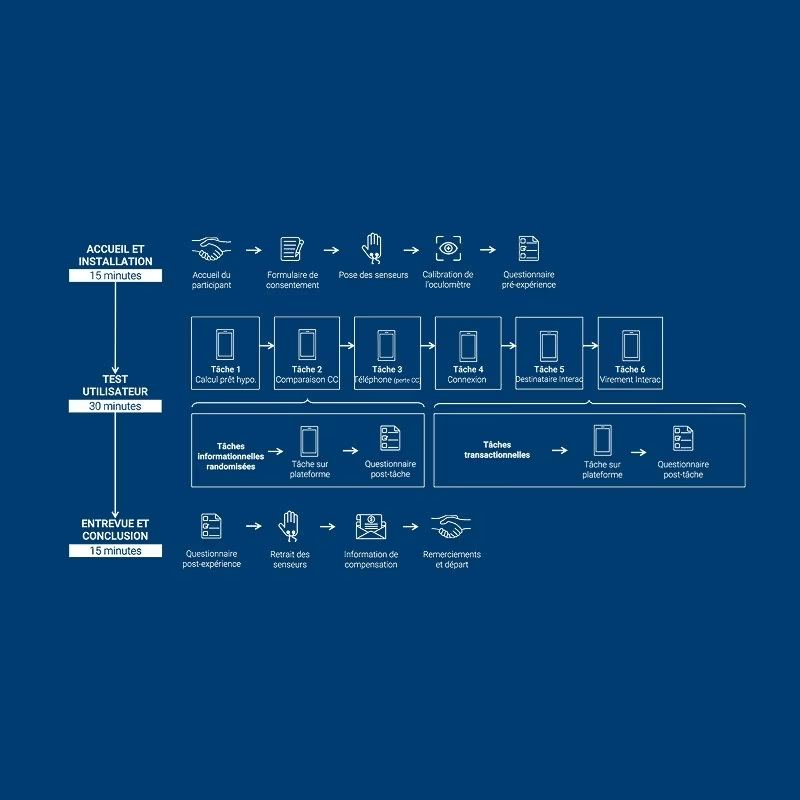

100+ controlled lab sessions

We ran a controlled experimental study combining performance measures, psychophysiological data, and psychometric assessments. Participants were recruited across a wide age range, from 18 to 80 years old, to capture meaningful variation in both age and digital self-efficacy levels.

Transactional tasks

- Log in to the mobile app

- Add an Interac recipient

- Complete an Interac transfer

Informational tasks

- Find a mortgage rate

- Compare two credit cards

- Locate the local support number

Key Decisions and Trade-offs

Choosing depth over breadth in the measurement approach

One of the core decisions in this project was committing to a multi-measure methodology, combining behavioral performance data, biometric readings, and psychometric scales. This added significant complexity to both data collection and analysis. A simpler approach would have been to rely on self-reported UX scores alone, which is far more common in applied research.

We chose the more rigorous path because the research question required it. For a credible scientific argument about self-efficacy as a moderating variable, the evidence needed to hold up to scrutiny. That meant more sessions, more setup time per participant, and a much heavier analysis phase. The year-long scope was planned from the start to give the study the statistical power it needed.

Adding nuance to age as a segmentation variable

Age was not being questioned as a useful variable. The methodological tension was more subtle: in online banking UX research, age captures real differences between users, but it may not fully explain why those differences exist. Building the case for self-efficacy as a moderating variable required a solid literature foundation first, then empirical demonstration that self-efficacy explained variance that age alone left unaccounted for.

The recommendation to the partner was not to abandon age as a variable, but to couple it with digital self-efficacy and mobile usage frequency at recruitment. That combination gives a richer, more representative sample without making the recruiting process significantly more complex.

Results

Hypothesis supported, actionable recommendations delivered

The hypothesis was supported: digital self-efficacy significantly moderates the relationship between age and several dimensions of user experience. Age does influence certain UX measures such as performance and cognitive load, but on its own it does not capture the real diversity of user profiles.
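For readers less familiar with moderation, it is commonly tested as an interaction term in a regression: the outcome is modeled from age, self-efficacy, and their product, and a non-zero product coefficient means the effect of age depends on self-efficacy. The sketch below illustrates that idea only. The study itself was analyzed in SAS; the data here are synthetic, the 1 to 7 self-efficacy scale is an assumption, and every coefficient is made up for the example rather than taken from the results.

```python
# Illustrative moderation (interaction) model on SYNTHETIC, noise-free data.
# Nothing here comes from the study; all numbers are invented for the sketch.

def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def fit_ols(rows, y):
    """Ordinary least squares via the normal equations (X'X) beta = X'y."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)

# Synthetic participants: every combination of age and self-efficacy score.
ages = list(range(18, 81, 2))        # 18, 20, ..., 80
efficacy = [1, 2, 3, 4, 5, 6, 7]     # hypothetical 1-7 scale
pairs = [(a, s) for a in ages for s in efficacy]

# Mean-center both predictors so the main effects are interpretable.
mean_age = sum(a for a, _ in pairs) / len(pairs)
mean_se = sum(s for _, s in pairs) / len(pairs)

# Assumed "true" coefficients for a task time in seconds:
# intercept, age, self-efficacy, age x self-efficacy.
TRUE = [60.0, 0.8, -5.0, -0.2]

rows, y = [], []
for a, s in pairs:
    ac, sc = a - mean_age, s - mean_se
    row = [1.0, ac, sc, ac * sc]
    rows.append(row)
    y.append(sum(b * x for b, x in zip(TRUE, row)))

b0, b_age, b_se, b_inter = fit_ols(rows, y)

def age_slope(se_level):
    """Simple slope: effect of one extra year of age at a given self-efficacy score."""
    return b_age + b_inter * (se_level - mean_se)

print(f"interaction coefficient: {b_inter:.3f}")
print(f"age slope at low self-efficacy (2):  {age_slope(2):.3f}")
print(f"age slope at high self-efficacy (6): {age_slope(6):.3f}")
```

In this made-up example the age slope is steeper at low self-efficacy than at high self-efficacy, which is the pattern a moderation effect describes. A real analysis would add noise, report standard errors, and test the interaction term for significance.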

Other key findings

- Strong correlation between mobile phone usage frequency and digital self-efficacy

- A single question about usage frequency is sufficient to reliably predict self-efficacy level

Strategic recommendation to the partner

Couple age with digital self-efficacy and mobile usage frequency at the recruitment stage. This produces richer participant profiles, reduces sampling bias, and increases the reliability of future UX studies.
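One way a research team might apply this at recruitment is to cross age bands with a single usage-frequency question and track which quota cells are still under-filled. The sketch below is an assumption-heavy illustration: the age bands, the hours-per-day question, the two-hour threshold, and all function names are hypothetical and are not the partner's actual screener.

```python
from collections import Counter

# Hypothetical age bands; the text only gives the overall 18-80 range.
AGE_BANDS = [(18, 34, "18-34"), (35, 54, "35-54"), (55, 80, "55-80")]
USAGE_LEVELS = ("low-use", "high-use")

def age_band(age):
    """Return the label of the band containing age, or None if out of range."""
    for low, high, label in AGE_BANDS:
        if low <= age <= high:
            return label
    return None

def usage_level(hours_per_day):
    """Collapse one usage-frequency answer into two levels (assumed threshold)."""
    return "high-use" if hours_per_day >= 2 else "low-use"

def quota_cell(age, hours_per_day):
    """Combine age band and usage level into a single recruitment cell."""
    band = age_band(age)
    if band is None:
        return None
    return (band, usage_level(hours_per_day))

def under_filled(candidates, target_per_cell):
    """List the cells that still have fewer participants than the target."""
    counts = Counter(quota_cell(age, hours) for age, hours in candidates)
    cells = [(label, level) for _, _, label in AGE_BANDS for level in USAGE_LEVELS]
    return [cell for cell in cells if counts[cell] < target_per_cell]

# Made-up screener answers: (age, hours of mobile use per day).
candidates = [(22, 5), (67, 0.5), (70, 4), (40, 1)]
print(under_filled(candidates, target_per_cell=1))
```

Tracking cells rather than age alone makes gaps visible, for example older high-frequency users or younger low-frequency users, which an age-only quota would not reveal.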

The banking partner's UX team found the recommendations compelling enough to integrate them directly into their internal research process. The methodology was adopted, not just noted.

Conclusion and Learnings

Rigorous science in a business context

This project sharpened my ability to operate at the intersection of academic research and industry constraints. Working within a real partnership meant the findings had to be both scientifically sound and immediately usable by a product team.

- Running a complete scientific process end-to-end in an industrial setting

- Building expertise in advanced quantitative analysis using SAS and statistical moderation models

- Conducting complex usability testing involving eye-tracking and biometric equipment

- Collaborating with diverse stakeholders across research and product functions

- Structuring and presenting findings in a way that drives real decisions, not just reports